When scouting for future innovations in the financial industry, quantum computing is moving to center stage as one of the hottest technologies to watch. Steadily gaining momentum on its way from a theoretical concept to tomorrow’s next disruptive technology, quantum computing is already showing considerable potential for solving problems that are far out of reach for conventional computing.

Deutsche Börse Group closely follows new trends as part of its strategic focus on new technologies, exploring what is driving the industry now and in the future. We initiated an early pilot project that applies quantum technology to a real-world problem in order to better understand the technology and its applicability to our business. For this project, Deutsche Börse Group engaged JoS QUANTUM, a Frankfurt-based fintech company, to develop a quantum algorithm that tackles existing challenges in computing our business risk models.

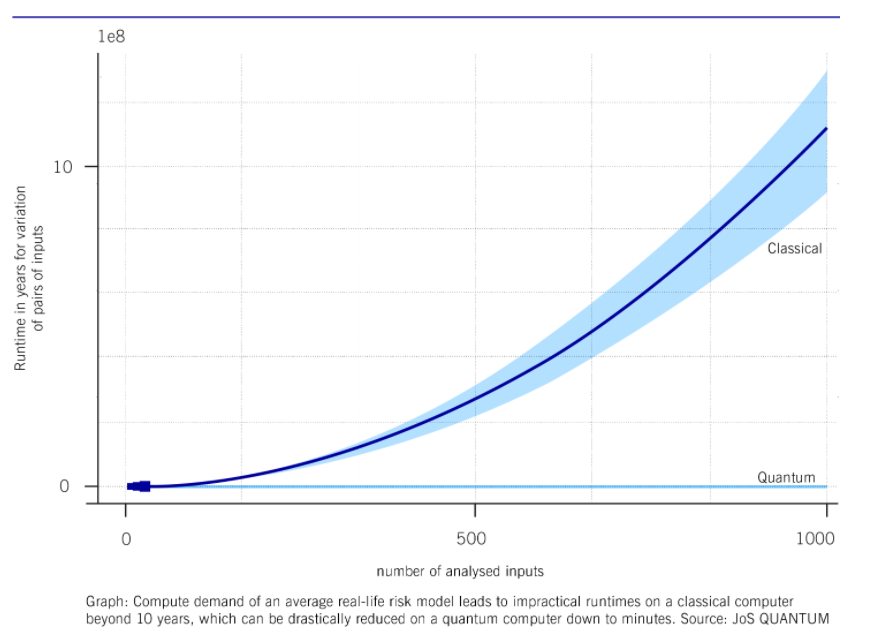

What is the problem to solve? Our risk models are used to forecast the financial impact of adverse external developments such as macroeconomic events, changes in competition, or new regulation. Today, computation is done via traditional Monte Carlo simulation on existing off-the-shelf hardware. Depending on the complexity of the model and the number of simulation parameters, computation time for risk models ranges from a few minutes to a few hours or even several days. For a full sensitivity analysis of today’s model, the number of scenarios to analyze grows disproportionately and computation time exceeds any realistic scope. We focussed on the speedup for up to 1,000 inputs, which would require up to 10 years of Monte Carlo simulation. In practice, calculations requiring more than a few days of computation time have no business benefit.
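To make the cost of a classical sensitivity analysis concrete, the following is a minimal sketch of a toy risk model, not the model used in the pilot. It assumes, purely for illustration, that each input is an independent risk event with an occurrence probability and a fixed financial impact. The function names, the `bump` size, and the sample count are hypothetical choices.

```python
import random


def simulate_loss(probabilities, impacts, n_samples, seed=0):
    """Estimate the expected loss of a toy risk model by Monte Carlo.

    Assumption (illustrative only): event i occurs independently with
    probability probabilities[i] and, if it occurs, costs impacts[i].
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        for p, impact in zip(probabilities, impacts):
            if rng.random() < p:
                total += impact
    return total / n_samples


def sensitivity(probabilities, impacts, bump=0.05, n_samples=20_000):
    """One-at-a-time sensitivity: bump each input and re-run the simulation.

    Every input needs its own full simulation run, so the work grows with
    the number of inputs (and faster still if combinations are varied).
    The same seed is reused so that differences reflect the bump, not noise.
    """
    base = simulate_loss(probabilities, impacts, n_samples)
    deltas = []
    for i in range(len(probabilities)):
        bumped = list(probabilities)
        bumped[i] = min(1.0, bumped[i] + bump)
        deltas.append(simulate_loss(bumped, impacts, n_samples) - base)
    return deltas


if __name__ == "__main__":
    probs = [0.10, 0.20, 0.05]
    costs = [10.0, 20.0, 30.0]
    for i, delta in enumerate(sensitivity(probs, costs)):
        print(f"input {i}: change in expected loss = {delta:.3f}")
```

Even in this toy setting, three inputs require four full simulation runs. With up to 1,000 inputs and a realistic model, the same pattern leads to the multi-year run times described above.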

What is the approach? By introducing quantum computing, the aim of the project was to analyze whether the total computing duration for a full sensitivity analysis can be reduced significantly, possibly from multiple years to below 24 hours. Furthermore, we wanted to gain insights into what kind of quantum computing power would be required to run such a full sensitivity analysis on a day-to-day basis, and to better understand the effort needed to code and run a real-world quantum use case.

Speed up calculation through quantum algorithms

The first step in calculating the sensitivity of the models’ simulated results was to vary the input parameters of the business risk scenario. The expected speed-up was simulated for a simplified model by applying Amplitude Estimation (the quantum version of Monte Carlo simulation) combined with Grover’s algorithm (a quantum search algorithm), which enables a quadratic speedup of calculations on quantum machines. This was then compared to the performance of the traditional Monte Carlo simulation running on off-the-shelf hardware. In a second step, the results of the simplified model were extrapolated to a model size of practical use. These results demonstrated that the application of quantum computing would drastically reduce the required computational effort and thus total calculation time. For the chosen benchmark of 1,000 inputs the “warp factor” is about 200,000, reducing the off-the-shelf Monte Carlo computation time of about 10 years to less than 30 minutes of quantum computing time.
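The scaling behind these figures can be checked with a few lines of arithmetic. The sketch below rests on two textbook assumptions that are not taken from the pilot itself: the error of classical Monte Carlo shrinks like 1/sqrt(N) in the number of samples N, while the error of Amplitude Estimation shrinks like 1/M in the number of quantum queries M. Constant factors and hardware overheads are ignored, and the function names are hypothetical.

```python
MINUTES_PER_YEAR = 365 * 24 * 60


def classical_samples(inverse_error):
    """Samples needed classically for a target error of 1/inverse_error.

    Monte Carlo error scales like 1/sqrt(N), so N scales like inverse_error**2.
    """
    return inverse_error ** 2


def quantum_queries(inverse_error):
    """Queries needed by Amplitude Estimation for the same target error.

    The error scales like 1/M, so M scales like inverse_error
    (the quadratic speedup).
    """
    return inverse_error


def quantum_minutes(classical_years, warp_factor):
    """Convert a classical run time in years to minutes after a given speedup."""
    return classical_years * MINUTES_PER_YEAR / warp_factor


if __name__ == "__main__":
    inv_eps = 1_000  # target error of 0.1 percent
    print(classical_samples(inv_eps), "classical samples")
    print(quantum_queries(inv_eps), "quantum queries")
    # Figures quoted in the article: about 10 years and a factor of 200,000
    print(f"{quantum_minutes(10, 200_000):.2f} minutes")
```

With the article's figures, 10 years divided by 200,000 comes to about 26 minutes, consistent with the stated "less than 30 minutes". The factor of 200,000 itself comes from the pilot's extrapolation and is not derived by this sketch.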

The third step was to execute the model successfully on IBM’s quantum machine (vigo) in Poughkeepsie, New York, accessed via the IBM cloud. This machine currently has only a small number of qubits, which limits the model size, so a smaller version of the model was run. The execution on the quantum machine allowed the team to verify the successful implementation of the risk model in quantum code. In addition, the hardware requirements in terms of the number and quality of qubits were evaluated in order to extrapolate which generation of quantum computing hardware will be required to run a full sensitivity analysis in production; such hardware appears likely to become available within a few years.

The results of the comparison between the classical, off-the-shelf computer performance and the quantum simulation and quantum measurements are shown below.

The results of the pilot project demonstrated that the expected speed-ups using quantum computing can be achieved. In addition, the team demonstrated the successful execution of a subset of the risk model on an IBM Quantum machine. The team also estimated the minimum quantum hardware required for a full model calculation: the requirements are comparatively modest and could be available within only a few years. And this is what makes this project so special. Quantum hardware providers may well meet these requirements in the second half of this decade, meaning that a real-life application of quantum computing in risk management could be only a matter of a few years.

This could open new horizons with significant benefits: a much more sophisticated, detailed, and fast, but equally robust risk management model, enabling comprehensive sensitivity analysis. Quantum computing would deliver results on a rich model fast enough to be of practical use. The quantum-based approach of this pilot study could also be applied to other areas of risk management: credit risk and operational risk seem to be natural areas where more complex models would allow more precise predictions.