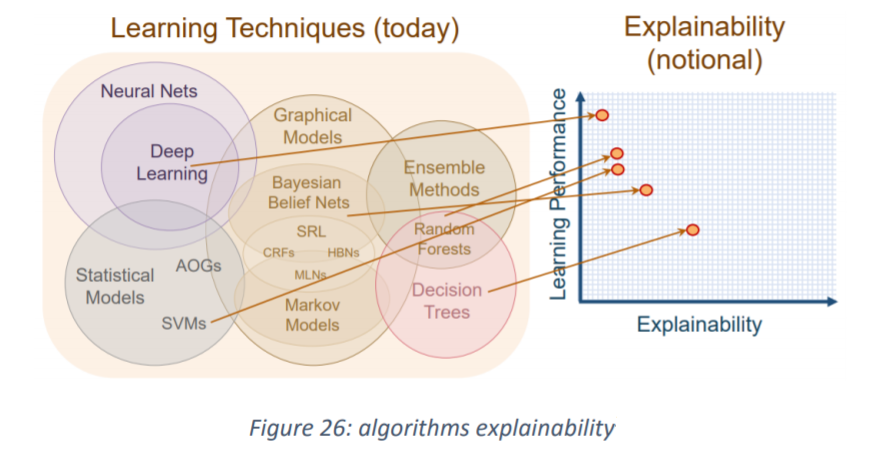

Institutions should implement measures to ensure the explainability of their artificial intelligence and machine learning systems from the design phase, according to a recent publication from the Commission de Surveillance du Secteur Financier (CSSF), Luxembourg’s financial watchdog. Full transparency cannot always be achieved because of the intrinsic nature of the algorithm employed, which is one of the main weaknesses of deep learning. Even then, steps can be taken to identify and isolate, in a human-understandable format, the main factors contributing to the final decision, the regulator added.
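As a rough illustration of what isolating the main factors behind a single decision can look like, the sketch below replaces each input of an opaque scoring function with a reference value and records how much the score moves. This is one simple, model-agnostic attribution approach and is not taken from the CSSF paper. The `black_box_score` model, its coefficients, the feature names, and the baseline values are all hypothetical, chosen only to make the example runnable.

```python
import math
from typing import Callable, Dict, List, Tuple


def black_box_score(applicant: Dict[str, float]) -> float:
    """Hypothetical opaque model returning an approval probability.

    The coefficients are invented for illustration only.
    """
    z = (0.00004 * applicant["income"]
         - 3.0 * applicant["debt_ratio"]
         + 0.1 * applicant["years_employed"]
         - 1.0)
    return 1.0 / (1.0 + math.exp(-z))


def top_factors(
    model: Callable[[Dict[str, float]], float],
    instance: Dict[str, float],
    baseline: Dict[str, float],
    k: int = 3,
) -> List[Tuple[str, float]]:
    """Rank features by how much the score changes when each one is
    swapped, one at a time, for its baseline (reference) value."""
    full_score = model(instance)
    contributions = []
    for name in instance:
        perturbed = dict(instance)          # copy so the input is untouched
        perturbed[name] = baseline[name]    # neutralise a single feature
        contributions.append((name, full_score - model(perturbed)))
    # Largest absolute effect first
    contributions.sort(key=lambda item: abs(item[1]), reverse=True)
    return contributions[:k]


# Hypothetical applicant and a hypothetical "typical customer" baseline
applicant = {"income": 30000.0, "debt_ratio": 0.6, "years_employed": 1.0}
baseline = {"income": 50000.0, "debt_ratio": 0.3, "years_employed": 5.0}

factors = top_factors(black_box_score, applicant, baseline)
for name, delta in factors:
    # A negative value means the feature lowered the score relative to baseline
    print(f"{name}: {delta:+.3f}")
```

The signed ranking can be turned into a plain-language statement such as "the debt ratio lowered the score the most". Because each feature is varied in isolation, this kind of sketch ignores interactions between features, so it is best read as a first approximation rather than a complete explanation.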

Other recommendations in the CSSF’s white paper on artificial intelligence cover topics such as data quality, governance, and external sources; privacy; governance; skills; cultural change; bias and discrimination; accountability; auditability; safety; change management; model updating; IT operations; robustness and security; systemic risks; and external AI providers and outsourcing.

On the last point, the CSSF said that institutions should evaluate the risks related to the maintenance of off-the-shelf packages and to the outsourcing of AI/ML development and maintenance activities. Adequate controls should be implemented in line with best practices and other regulatory requirements applicable to outsourcing.