Adversaries can attack artificial intelligence (AI) systems to make them malfunction. In January 2024, the National Institute of Standards and Technology (NIST) published voluntary guidelines on how to identify and mitigate these attacks. The guidelines are primarily intended for those who design, develop, deploy, evaluate and govern AI systems.

Now, NIST has finalized the guidelines. Adversarial Machine Learning: A Taxonomy and Terminology of Attacks and Mitigations, created with input from industry and academia, has a number of revisions that may interest AI developers and users.

These include:

- The section on generative AI attacks and mitigation methods has been updated and restructured to reflect the most recent developments regarding these technologies and how businesses are using them.

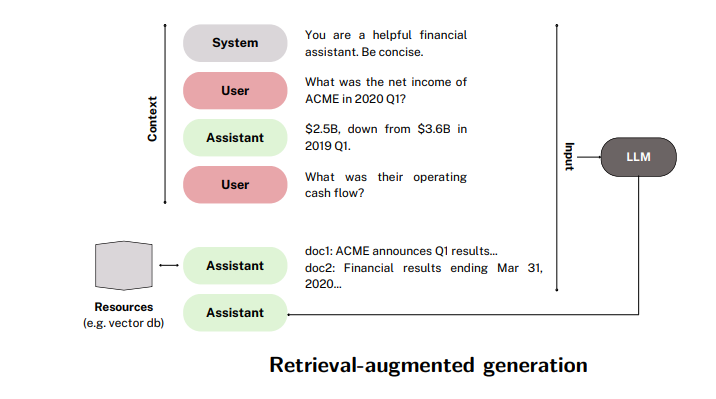

Note: an agent system may select from among a configured set of external dependencies and invoke the code with templates filled out by the LLM using information in the context. Adversarial inputs into this context, such as from interactions with untrusted resources, could hijack the agent into performing adversary-specified actions instead, leading to potential security or safety violations. Source: NIST

Note: an agent system may select from among a configured set of external dependencies and invoke the code with templates filled out by the LLM using information in the context. Adversarial inputs into this context, such as from interactions with untrusted resources, could hijack the agent into performing adversary-specified actions instead, leading to potential security or safety violations. Source: NIST - A new section, an index of attacks and mitigations, has been added to allow for fine-grain definition and navigation of attacks. This improves the usability of the guidelines and will promote efficient and consistent communication between practitioners and other stakeholders.