Big Data has transformed retail, government and social media, and it is now coming to securities finance. Securities finance has always had to contend with a substantial amount of data, but Big Data presents a new and emerging complication. The change ahead of us lies in how much more data is created, how much further it needs to travel, and what else needs to be done with it. This is where Big Data really earns its name.

Big Data can have a variety of meanings, but at its core is the need to capture, analyze and manipulate a vast and rapidly changing array of information. The common theme is that Big Data is too big to manage without a robust technology infrastructure. It is a step up from what is called High Velocity Data, which involves managing real-time data for operational processing; Big Data adds the challenge of handling the massive volumes now being created.

A few data points illustrate how big Big Data can get:

- Multiple sources estimate worldwide data usage in 2015 at eight to nine zettabytes, rising to 36 to 45 zettabytes by 2020.(1) A zettabyte is equal to one trillion gigabytes.

- Big Data is now a $30 billion marketplace with an annual growth rate of 17 percent, according to Wikibon.(2)

- A recent Accenture survey found that 89 percent of business leaders thought that Big Data “will revolutionize the way business is done in the same way the Internet did”.(3)
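The zettabyte forecast above implies a steep growth rate. As a rough check, the compound annual growth rate can be computed from the quoted ranges. The sketch below assumes a five-year horizon from 2015 to 2020 and steady compounding, which the cited sources do not necessarily assume, and pairs the ends of the two ranges to get a low and a high estimate.

```python
# Implied compound annual growth rate (CAGR) of worldwide data volumes.
# Assumptions: five years from 2015 to 2020, constant-rate compounding.

ZB_IN_GB = 10**12  # one zettabyte = one trillion gigabytes (decimal units)


def cagr(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


low = cagr(9, 36, 5)   # conservative pairing: high 2015 base, low 2020 figure
high = cagr(8, 45, 5)  # aggressive pairing: low 2015 base, high 2020 figure

print(f"Implied annual growth: {low:.0%} to {high:.0%}")  # prints: Implied annual growth: 32% to 41%
print(f"8 ZB = {8 * ZB_IN_GB:,} GB")                      # prints: 8 ZB = 8,000,000,000,000 GB
```

On these assumptions, data volumes grow by roughly 32 to 41 percent a year, about two to two-and-a-half times the 17 percent annual growth cited for the Big Data market.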

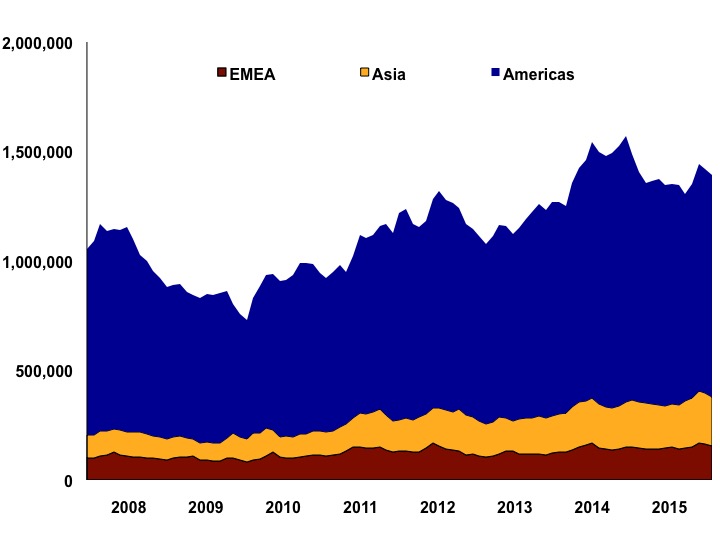

In securities lending, the volume of data being processed declined from 2008 to 2009. It then grew in fits and starts through 2015, according to FIS (see Exhibit 1). The greatest growth in data has occurred in North America: In 2008, these transactions were 63 percent of the global total, while in 2015 they were 72 percent. Europe’s market share also increased, from eight percent to 10 percent. Globally, however, the need to convert current data sets into a usable tool for informed decision-making is rising consistently.

Exhibit 1: Transaction volume data in securities lending

Source: FIS’ Astec Analytics

There are two major applications of Big Data in the securities finance space: reporting and analytics.

In reporting, a plethora of new regulatory mandates is driving the need for flexible and robust infrastructures for capturing data and submitting it to trade repositories. Each trade repository will have its own data submission requirements, making each market participant responsible for gathering and delivering data in an acceptable format.
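Because each trade repository defines its own submission format, one common way to structure the reporting layer is to keep a single internal trade record and plug in one formatter per repository. The sketch below illustrates that pattern; both repository formats, all field names and the placeholder identifiers are hypothetical and do not reflect any real trade repository's specification.

```python
import csv
import io
import json
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class LoanTrade:
    """Internal trade record (hypothetical field set)."""
    trade_id: str
    counterparty_lei: str
    isin: str
    quantity: int
    fee_bps: float
    trade_date: date


def to_repo_a(trades: list[LoanTrade]) -> str:
    """Hypothetical repository A: a JSON array with its own field names."""
    return json.dumps([
        {
            "UTI": t.trade_id,
            "CptyLEI": t.counterparty_lei,
            "ISIN": t.isin,
            "Qty": t.quantity,
            "FeeBps": t.fee_bps,
            "TradeDt": t.trade_date.isoformat(),
        }
        for t in trades
    ])


def to_repo_b(trades: list[LoanTrade]) -> str:
    """Hypothetical repository B: CSV, fee as a decimal rate, compact dates."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["trade_ref", "lei", "isin", "qty", "rate", "date"])
    for t in trades:
        writer.writerow([
            t.trade_id, t.counterparty_lei, t.isin, t.quantity,
            f"{t.fee_bps / 10_000:.6f}", t.trade_date.strftime("%Y%m%d"),
        ])
    return buf.getvalue()


# One formatter per repository; a new mandate means one new entry here.
FORMATTERS = {"repo_a": to_repo_a, "repo_b": to_repo_b}


def build_submissions(trades: list[LoanTrade]) -> dict[str, str]:
    """Render the same internal trades once for each repository."""
    return {repo: fmt(trades) for repo, fmt in FORMATTERS.items()}


trade = LoanTrade("T1", "LEI-PLACEHOLDER", "ISIN-PLACEHOLDER", 1000, 25.0, date(2015, 6, 30))
for repo, payload in build_submissions([trade]).items():
    print(repo, payload, sep="\n")
```

Keeping the mapping logic in small per-repository functions means the trade data is captured once, and each new reporting requirement adds a formatter rather than another copy of the data.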

On top of external reporting mandates, greater internal reporting and capital calculation requirements mean taking very large volumes of proprietary trade and market data and turning them into standard, reproducible reports for business users. Parts of these reports will roll up to Treasury managers, who use them for Liquidity Coverage Ratio (LCR) and Leverage Ratio calculations and to assign a capital cost to each transaction.
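The Treasury calculations mentioned above rest on two Basel III ratios: the LCR divides the stock of HQLA by total net cash outflows over the next 30 calendar days (minimum 100 percent once fully phased in), and the Leverage Ratio divides Tier 1 capital by a non-risk-weighted exposure measure (minimum 3 percent). The sketch below computes both and then allocates a capital cost to a single trade. The allocation rule (exposure times a target leverage ratio, charged at an annual cost of capital over the trade's term) and all input numbers are illustrative assumptions, not a method stated in this article.

```python
from dataclasses import dataclass

# Basel III headline minimums.
LCR_MINIMUM = 1.00       # HQLA must cover 30-day net cash outflows
LEVERAGE_MINIMUM = 0.03  # Tier 1 capital / total exposure measure


def liquidity_coverage_ratio(hqla: float, net_outflows_30d: float) -> float:
    """Stock of HQLA divided by total net cash outflows over the next 30 days."""
    return hqla / net_outflows_30d


def leverage_ratio(tier1_capital: float, exposure_measure: float) -> float:
    """Tier 1 capital divided by the total (non-risk-weighted) exposure measure."""
    return tier1_capital / exposure_measure


@dataclass(frozen=True)
class Trade:
    trade_id: str
    exposure: float  # the trade's contribution to the exposure measure
    term_days: int


def capital_cost(trade: Trade, target_leverage: float, cost_of_capital: float) -> float:
    """Hypothetical allocation rule: the trade consumes capital equal to its
    exposure times the firm's target leverage ratio, charged at an annual
    cost of capital and pro-rated over the term on an ACT/360 basis."""
    capital_used = trade.exposure * target_leverage
    return capital_used * cost_of_capital * trade.term_days / 360


# Illustrative inputs only.
lcr = liquidity_coverage_ratio(hqla=120.0, net_outflows_30d=100.0)
lev = leverage_ratio(tier1_capital=4.0, exposure_measure=100.0)
cost = capital_cost(Trade("T1", 10_000_000, 90), target_leverage=0.04, cost_of_capital=0.10)
print(f"LCR {lcr:.0%} (meets minimum: {lcr >= LCR_MINIMUM})")
print(f"Leverage ratio {lev:.1%} (meets minimum: {lev >= LEVERAGE_MINIMUM})")
print(f"Capital cost of T1: {cost:,.0f}")
```

Running this per transaction across a whole book is where data volume becomes the constraint: every trade needs its exposure, term and the current firm-level ratios at hand.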

Big Data is also critical for transaction analytics. High frequency traders already use Big Data to mine stock market activity and predict future market direction. Some market participants in securities finance are building analytics systems for in-house use by traders and computer algorithms. Big Data infrastructures speed up these analytics programs and allow more flexible manipulation of the data. The less time it takes to process reams of securities lending data, the faster a trader or liquidity manager can act.
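As an illustration of the kind of computation involved, the sketch below condenses position-level lending records into per-security utilization (value on loan divided by lendable value) and a value-weighted average fee in a single pass. The record layout and field names are assumptions for illustration; at real Big Data scale the same group-and-aggregate logic would be pushed down to a database or distributed engine rather than run in a Python loop.

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class LoanRecord(NamedTuple):
    """One row per lender account and security (hypothetical layout)."""
    security: str
    on_loan_value: float
    lendable_value: float
    fee_bps: float  # fee on this account's loans of the security


def summarize(records: Iterable[LoanRecord]) -> dict[str, dict[str, float]]:
    """One pass over the records: utilization and value-weighted fee per security."""
    on_loan: dict[str, float] = defaultdict(float)
    lendable: dict[str, float] = defaultdict(float)
    fee_x_value: dict[str, float] = defaultdict(float)
    for r in records:
        on_loan[r.security] += r.on_loan_value
        lendable[r.security] += r.lendable_value
        fee_x_value[r.security] += r.fee_bps * r.on_loan_value

    summary = {}
    for security, pool in lendable.items():
        loaned = on_loan[security]
        summary[security] = {
            "utilization": loaned / pool if pool else 0.0,
            "avg_fee_bps": fee_x_value[security] / loaned if loaned else 0.0,
        }
    return summary


records = [
    LoanRecord("SEC-A", 50.0, 100.0, 20.0),
    LoanRecord("SEC-A", 30.0, 100.0, 40.0),
    LoanRecord("SEC-B", 0.0, 200.0, 0.0),
]
print(summarize(records))
```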

While Big Data analytics in securities finance is just beginning to take hold, there are already a number of potential widespread applications:

- Pricing tools for automatically generating rates and re-rates for financing transactions according to a combination of internal and external patterns. Many firms already do this, but only for General Collateral; the growth of Big Data tools could make accurate, automated pricing more common for warm and hard-to-borrow securities as well. Transactions could be priced not just on the prevailing rate but also on analysis of historical data, including expected borrow term, counterparty risk and the turnover of the underlying account. Without sufficient automation to support ever more complex trade characteristics, the risk of missing revenue opportunities increases.

- An internal market model could show that collateral levels for a basket of equities or some High Quality Liquid Assets (HQLA) meet or exceed regulatory requirements based on dynamically changing risk factors. Big Data analytics would allow a wide range of analyses and calculations in a short time, not only to satisfy regulatory requirements but also to ensure your operating margins are secure. Are your country, concentration and cliff risks covered adequately? How do you know?

- Clients may demand more granularity and intelligence in their analytics, on a real-time basis. Big Data would allow robust computational analysis for each client on demand, rather than relying on current systems that depend heavily on static reports.

- Testing for the optimal cross-product, cross-platform solution. If the cost of repo financing is 20 bps through a CCP but 10 bps with a bilateral counterparty, and the cost of a futures product is only 5 bps, then a dealer may want to use futures for its financing needs. But the dealer will need to know which client assets can be used most effectively, potentially subject to netting and term trade/evergreen capabilities, with the resulting balance sheet impact factored into the price. Your systems need to be able to match multiple trade structures against an almost infinite combination of lender fund, collateral requirement, margin floor and credit limit variables, all in real time. Without the ability to cope with these situations, returns may fall and business may move elsewhere.
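The pricing bullet above lists the inputs an automated rate engine might combine: the prevailing rate, expected borrow term, counterparty risk and the turnover of the underlying account. The sketch below combines them in a simple linear rule. The functional form, the coefficients, the 0 to 1 risk score and the direction of the turnover adjustment are all hypothetical; a production tool would estimate them from historical data rather than hard-code them.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class LoanRequest:
    prevailing_fee_bps: float   # current market rate for the security
    expected_term_days: float   # e.g. estimated from this borrower's past loans
    counterparty_risk: float    # hypothetical internal score, 0 (low) to 1 (high)
    account_turnover: float     # share of the lender's position sold per year


def quote_fee_bps(req: LoanRequest,
                  term_premium_per_30d: float = 1.0,
                  risk_premium: float = 10.0,
                  turnover_discount: float = 5.0) -> float:
    """Hypothetical linear pricing rule; the coefficients are placeholders
    that a real tool would calibrate against historical lending data."""
    fee = req.prevailing_fee_bps
    fee += term_premium_per_30d * req.expected_term_days / 30  # longer term, higher fee
    fee += risk_premium * req.counterparty_risk                 # riskier borrower, higher fee
    # Assumption: high turnover raises recall risk for the borrower, so it lowers the fee.
    fee -= turnover_discount * req.account_turnover
    return round(fee, 2)


past_terms = [45, 60, 75]  # illustrative history of this borrower's loan terms, in days
req = LoanRequest(prevailing_fee_bps=25.0, expected_term_days=mean(past_terms),
                  counterparty_risk=0.2, account_turnover=0.5)
print(quote_fee_bps(req))  # prints 26.5
```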
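The collateral bullet above asks whether country, concentration and cliff risks are covered adequately. A minimal sketch of such a check follows: it applies haircuts, tests the post-haircut value against the exposure plus a margin, and flags any issuer or country whose share of the basket exceeds a limit. The 102 percent margin and the 20 and 40 percent limits are placeholders rather than regulatory figures, and cliff risk, which would need scenario analysis, is left out.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Holding:
    issuer: str
    country: str
    market_value: float
    haircut: float  # e.g. 0.05 for a 5% haircut


def check_collateral(holdings: list[Holding], exposure: float,
                     margin: float = 1.02,
                     max_issuer_share: float = 0.20,
                     max_country_share: float = 0.40) -> list[str]:
    """Return human-readable breaches; an empty list means the basket passes.
    The margin and limits are hypothetical placeholders, not regulatory values."""
    adjusted = [h.market_value * (1 - h.haircut) for h in holdings]
    total = sum(adjusted)
    breaches = []

    # Coverage: post-haircut value must meet the exposure plus margin.
    required = exposure * margin
    if total < required:
        breaches.append(f"coverage {total:,.0f} below required {required:,.0f}")

    # Concentration and country risk: no bucket may exceed its share of the basket.
    by_issuer: dict[str, float] = defaultdict(float)
    by_country: dict[str, float] = defaultdict(float)
    for h, value in zip(holdings, adjusted):
        by_issuer[h.issuer] += value
        by_country[h.country] += value
    for kind, buckets, limit in (("issuer", by_issuer, max_issuer_share),
                                 ("country", by_country, max_country_share)):
        for name, value in buckets.items():
            if total > 0 and value / total > limit:
                breaches.append(f"{kind} {name} at {value / total:.0%} exceeds {limit:.0%}")
    return breaches


basket = [Holding("A", "US", 50.0, 0.0), Holding("B", "US", 50.0, 0.0)]
for breach in check_collateral(basket, exposure=100.0):
    print(breach)
```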
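The cross-product bullet above compares financing routes at 20 bps (CCP repo), 10 bps (bilateral repo) and 5 bps (futures). The sketch below picks the cheapest eligible route for an asset class on an all-in basis. The balance sheet add-ons and the asset eligibility sets are invented for illustration; a real system would also have to handle netting, term/evergreen structures, margin floors and credit limits.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Route:
    name: str
    funding_cost_bps: float
    balance_sheet_cost_bps: float  # hypothetical add-on, e.g. lower where netting applies
    eligible_assets: frozenset[str]


ROUTES = [
    # Funding costs follow the example in the text; the balance sheet
    # add-ons and eligibility sets are illustrative assumptions.
    Route("repo via CCP", 20, 0, frozenset({"govt", "equity"})),
    Route("bilateral repo", 10, 15, frozenset({"govt", "equity"})),
    Route("futures", 5, 0, frozenset({"equity"})),
]


def all_in_cost(route: Route) -> float:
    return route.funding_cost_bps + route.balance_sheet_cost_bps


def cheapest_route(asset_class: str, routes: list[Route] = ROUTES) -> Optional[Route]:
    """Cheapest eligible route by all-in cost, or None if no route accepts the asset."""
    eligible = [r for r in routes if asset_class in r.eligible_assets]
    return min(eligible, key=all_in_cost, default=None)


for asset in ("equity", "govt", "corporate"):
    best = cheapest_route(asset)
    print(asset, "->", best.name if best else "no eligible route")
```

With these made-up add-ons, futures win for equities, as in the text, while for government bonds (treated here as ineligible for futures) the CCP beats the bilateral trade, because the bilateral route's assumed balance sheet cost outweighs its lower headline rate.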

Big Data represents both risks and opportunities. Without significant efforts to convert the vast arrays of data into actionable information, not only do you risk being left behind by your competitors, but there is also a very real chance that Big Data will simply swamp your business, creating confusion and misdirection. Making sense of the data available to an organization, whether you outsource to an expert like FIS or manage it in-house, has to be a prime objective in the modern data-driven world. Now is the time to get in front of Big Data – and if your strategy and data partners are up to scratch, you will avoid getting run over by it.

David Lewis is Senior Vice President, FIS’ Astec Analytics

Footnotes:

(1) Alphacution, IBM and others.

(2) Wikibon Big Data Market Forecast, available at http://wikibon.com/executive-summary-big-data-vendor-revenue-and-market-forecast-2011-2026/

(3) How to achieve big success from big data, Accenture, April 2014, available at https://www.accenture.com/us-en/_acnmedia/Accenture/Conversion-Assets/DotCom/Documents/Global/PDF/Industries_14/Accenture-Big-Data-Infographic#zoom=50