Collateral optimization remains a pressing topic in financial markets. While institutions have made great progress in refining the models they use, accurate data aggregation and operational complexity remain substantial challenges. The complications include tracking data both externally and internally, then deciding how that data is organized, characterized, viewed and ultimately fed into a collateral optimization process. Market participants increasingly understand that collateral optimization is much more than an algorithm, but attaining an ideal infrastructure remains difficult.

There is broad consensus across financial institutions that operations and data are vital for collateral optimization, but firms working independently may not easily solve the problem. Recent market commentary has noted that:

- “Gathering and managing the data from multiple counterparties, clearing brokers, CSDs and custodians is complex and requires a sophisticated infrastructure. Given the advanced analytics and intricate algorithms required to manage margin and optimize collateral, that infrastructure is expensive to build and maintain. It requires an ongoing, and significant, investment that only grows as counterparty relationships multiply and volumes increase.” (J.P. Morgan)

- “Standardized data that is created, updated and disseminated on a real-time basis is a critical prerequisite for breaking down organizational silos and helping firms manage exposures and collateral settlement. Yet standardizing data remains a major challenge across firms.” (Cognizant)

- “Increased collateral obligations and practices could include a single pool of collateral inventory, i.e. an ability to obtain a full overview of all of the firm’s existing collateral, in either an actual or virtual form.” (BNY Mellon)

A core problem is that infrastructure is expensive. It is typically not viable for each firm to build its own custom solution, which would include APIs to every data source, collateral mapping tools to turn raw data into actionable insights, and interfaces to adjust data and collateral decisions on the fly. Rather, firms are turning to outsourced providers to manage these tasks.

External vs. Internal Infrastructure

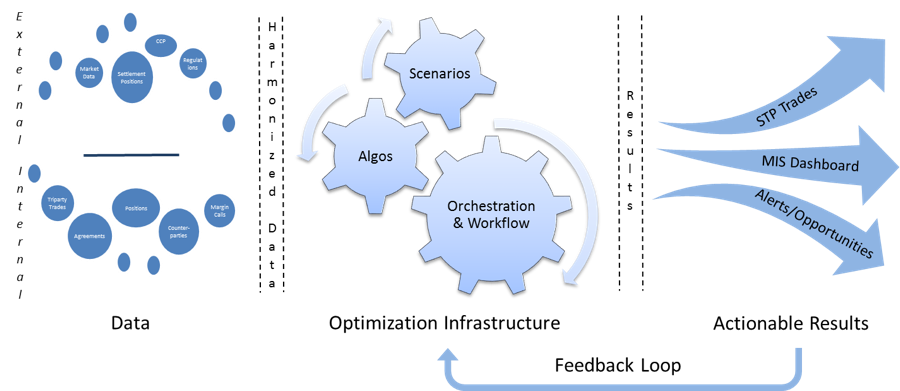

There are two sides to managing infrastructure for collateral optimization, one external to the firm and the other internal. The two are quite different and solve distinct problems. Externally, collateral utilities seek to aggregate all of a firm’s positions across custodians and Central Securities Depositories. However, the external approach is like looking at the outside of a big mechanical toy box and expecting it to solve complex data challenges. The external view takes care of everything outside the box. The next step is to get granular: what’s inside the box, what parts drive it and how does it work?

The internal approach to collateral infrastructure is breaking down what each position means: what ISDA collateral agreements are associated with which trades, or what collateral will counterparties to a specific trade accept? What is the best way to align supply and demand of high quality collateral and related term structure for best results in terms of economic and regulatory drivers? For CCPs, what is acceptable collateral? That information typically exists only within the organization; a collateral utility cannot solve the problem of maintaining that data and keeping it actionable for decision making purposes. But once that information is on hand, it can be deployed to make truly robust collateral and liquidity optimization decisions.
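The internal questions above (which collateral a given agreement accepts, and at what value) can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the `Position` and `EligibilityRule` structures, the rating scale, the haircut convention and the placeholder identifiers are hypothetical and are not taken from any particular agreement or system.

```python
from dataclasses import dataclass

# Hypothetical rating scale, best to worst (illustrative only).
RATING_ORDER = ["AAA", "AA", "A", "BBB", "BB", "B"]


@dataclass(frozen=True)
class Position:
    isin: str            # placeholder identifier, not a real security
    asset_class: str     # e.g. "UST", "CORP"
    rating: str          # one of RATING_ORDER
    market_value: float


@dataclass(frozen=True)
class EligibilityRule:
    agreement_id: str             # hypothetical agreement reference
    accepted_classes: frozenset   # asset classes the counterparty accepts
    min_rating: str               # worst rating still accepted
    haircut: float                # fraction deducted from market value


def is_eligible(pos: Position, rule: EligibilityRule) -> bool:
    """True if the position meets the agreement's class and rating criteria."""
    meets_class = pos.asset_class in rule.accepted_classes
    meets_rating = RATING_ORDER.index(pos.rating) <= RATING_ORDER.index(rule.min_rating)
    return meets_class and meets_rating


def collateral_value(pos: Position, rule: EligibilityRule) -> float:
    """Post-haircut value of the position under the agreement, or 0 if ineligible."""
    if not is_eligible(pos, rule):
        return 0.0
    return pos.market_value * (1.0 - rule.haircut)


# Example usage with made-up data.
rule = EligibilityRule("CSA-1", frozenset({"UST"}), "AA", 0.02)
treasury = Position("PLACEHOLDER-UST", "UST", "AAA", 1_000_000.0)
corporate = Position("PLACEHOLDER-CORP", "CORP", "BBB", 500_000.0)
print(is_eligible(treasury, rule), is_eligible(corporate, rule))
```

The point of the sketch is that the acceptance criteria live alongside the positions: once both are in one place, eligibility becomes a lookup rather than a manual check.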

Algorithms vs. Infrastructure

Just as there is an external and internal view of data for collateral optimization, so too is there an important difference between the infrastructure for collateral and the models that run optimization logic. Which is more important? In fact, infrastructure comes first: models cannot function without the right data inputs.

Algorithms for collateral optimization come in many different forms, ranging from simple preferences and lists to complex multivariate analyses. They can consider not only what counterparties will accept, but also competing opportunities to finance or gain revenue from the same collateral assets. At the very least, these models should allow users to save the best collateral assets for when they are needed most, for example US Treasuries retained as High Quality Liquid Assets or as collateral for counterparties that will only accept these assets. In a best-case scenario, optimization analytics will include the opportunity to repo or lend securities, both for profit and to generate cash for alternative investment purposes. It is very important to identify algorithms that are practical to implement, as an economically optimal output may not be the best option once operational constraints and costs are overlaid on the results.
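At the simple end of that range, a preference list can be sketched as a greedy allocation: deliver the least valuable eligible assets first and hold the best assets back. The sketch below is an assumption-laden illustration, not a description of any vendor's algorithm. The `allocate` function, the asset labels and the delivery order are hypothetical, and haircuts, concentration limits and settlement costs are deliberately ignored.

```python
def allocate(requirement, inventory, eligible, delivery_order):
    """Greedy preference-list allocation (illustrative sketch).

    requirement    -- collateral value the counterparty requires
    inventory      -- dict of asset label -> available value
    eligible       -- set of asset labels the counterparty accepts
    delivery_order -- asset labels from "deliver first" to "retain longest"

    Returns (allocation dict, unmet shortfall).
    """
    remaining = requirement
    allocation = {}
    for asset in delivery_order:
        if remaining <= 0:
            break
        if asset not in eligible:
            continue  # counterparty will not accept this asset
        used = min(inventory.get(asset, 0.0), remaining)
        if used > 0:
            allocation[asset] = used
            remaining -= used
    return allocation, max(remaining, 0.0)


# Example usage with made-up values: Treasuries are last in the delivery order.
inventory = {"CORP": 40.0, "AGENCY": 30.0, "UST": 100.0}
order = ["CORP", "AGENCY", "UST"]

# A counterparty that accepts everything is served without touching Treasuries.
print(allocate(60.0, inventory, {"CORP", "AGENCY", "UST"}, order))

# A Treasuries-only counterparty still finds them available.
print(allocate(60.0, inventory, {"UST"}, order))
```

A list like this is easy to operate but says nothing about economic cost, which is why firms may move toward the multivariate approaches mentioned above.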

For any of these models to work, the infrastructure must support the collection of all available data. For an institution with only one omnibus account, this infrastructure is as easy as a download from the custodian and market data feeds. Very few firms are this simple, however. As institutions grow, acquire competitors, diversify their geographic jurisdictions, and create multiple legal entities to meet different business objectives, the number of account structures and custodial and CSD relationships grows with them. The only way for a diversified financial institution to truly optimize its assets is to capture its data across all pools where it currently resides.
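As a rough illustration of capturing data across pools, the sketch below rolls position records from several sources into one firm-wide view while keeping track of where each holding sits. The record layout `(source, legal_entity, isin, quantity)`, the function name and the sample data are assumptions; real custodian and CSD feeds differ in format and would need mapping first.

```python
from collections import defaultdict


def aggregate_positions(records):
    """Combine position records into a single inventory view.

    records -- iterable of (source, legal_entity, isin, quantity) tuples,
               a hypothetical layout chosen for this sketch.

    Returns (total quantity per ISIN, per-ISIN breakdown by (source, entity)).
    """
    total = defaultdict(float)
    breakdown = defaultdict(lambda: defaultdict(float))
    for source, entity, isin, quantity in records:
        total[isin] += quantity
        breakdown[isin][(source, entity)] += quantity
    # Convert to plain dicts so callers get ordinary, comparable structures.
    return dict(total), {isin: dict(locs) for isin, locs in breakdown.items()}


# Example usage with made-up sources and identifiers.
records = [
    ("CustodianA", "Entity1", "ISIN1", 100.0),
    ("CSD_B", "Entity1", "ISIN1", 50.0),
    ("CustodianA", "Entity2", "ISIN2", 25.0),
]
total, breakdown = aggregate_positions(records)
print(total)
print(breakdown["ISIN1"])
```

Keeping the breakdown matters because an asset that is available in aggregate may still sit in the wrong account or legal entity for a given obligation.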

The idea of infrastructure and algorithms ties back to the idea of data mining: algorithms can best function when they have intelligent data to work with. Data that show collateral positions without intelligence on collateral acceptance criteria or term profiles for funding decisions mean that algorithms will need human intervention after producing results to ensure that no mistakes are made. On the other hand, algorithms that start with accurate data will generate results that are immediately actionable.

Collateral Infrastructure Supports Organizational Trends

More actionable results mean greater efficiency in the collateral optimization process, which translates into increased revenues with fewer manual interventions. While building out collateral infrastructure is good for collateral optimization, it also supports an important trend occurring at major financial institutions today. Banks seeking to promote Straight-through Processing (STP) need a robust collateral infrastructure so that more activities in the collateral and liquidity analytics process can be automated. One infrastructure across the bank also supports the build-out of enterprise-wide technology architectures. As more banks move to the enterprise model, the need for a single collateral infrastructure that can speak to multiple business-unit-specific technologies becomes increasingly important.

The same trend is occurring on the buy-side, although more slowly, as cost pressures have not yet forced the same types of realignments that banks are going through. Buy-side firms recognize that a breakdown of silos is in their best interest. As regulations force buy-side firms to be more transparent to the public on their collateral holdings, collateral infrastructure may provide solutions to both regulatory reporting and internal collateral management.

Transcend Street’s CoSMOS Solution for Collateral Infrastructure

At Transcend Street Solutions, we build out internal collateral infrastructures with our CoSMOS product. CoSMOS aggregates collateral information across business lines in real time and mines collateral agreements across those business lines to deliver a real-time view of opportunities in collateral. The process of data mining is complex, covering tri-party, OTC derivatives, CCPs and various other agreement types. This is typically where most firms have trouble executing on their infrastructure requirements, and data mining is a good example of where industry vendors can help market participants surmount tough obstacles.

A full implementation of the CoSMOS product provides three key solutions:

- Harmonizing data across all collateral agreements, trades, repos, borrows, loans, positions, settlement ladders, margin calls and exposure data, reference data for securities, accounts, legal entities and market data. This is the core building block of optimization: knowing what an organization holds, what is required by counterparties and what opportunities are available with the available assets.

- Providing analytics and decision support, for example identifying where a collateral substitution or optimization process can result in quantifiable cost savings or new opportunities, or conducting liquidity analytics to show Sources and Uses of assets and collateral across the firm. CoSMOS provides multiple options for optimization algorithms: firms can choose from a simple liquidity preference algorithm to a more sophisticated linear programming model. In addition, firms can implement their own algorithms leveraging the power of CoSMOS data and its STP platform.

- Giving users control over information in powerful dashboards for taking action. This is where collateral-related activities and collateral optimization models can make a difference. Full Straight-through Processing is enabled via API-based integration with firms’ business unit collateral systems so that optimization output can be analyzed, tracked and processed. This helps firms realize the full benefits of optimization.
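For readers unfamiliar with the linear programming approach mentioned in the list above, a generic textbook formulation is sketched here. It is an illustration under stated assumptions, not a description of the CoSMOS model: all symbols are introduced for this example. Let $x_{ij}$ be the market value of asset $i$ posted against obligation $j$, $c_{ij}$ the opportunity cost per unit of doing so, $h_{ij}$ the haircut, $e_{ij} \in \{0, 1\}$ an eligibility indicator, $R_j$ the required collateral value for obligation $j$, and $S_i$ the available supply of asset $i$.

$$
\begin{aligned}
\min_{x} \quad & \sum_{i}\sum_{j} c_{ij}\, x_{ij} \\
\text{subject to} \quad & \sum_{i} (1 - h_{ij})\, x_{ij} \ge R_j \quad \text{for every obligation } j, \\
& \sum_{j} x_{ij} \le S_i \quad \text{for every asset } i, \\
& 0 \le x_{ij} \le e_{ij}\, S_i \quad \text{for every pair } (i, j).
\end{aligned}
$$

The first constraint covers each requirement after haircuts, the second prevents using more of an asset than the firm holds, and the third forces ineligible pairings to zero. Operational constraints such as minimum transfer sizes or limits on the number of movements would need additional terms and may turn the problem into a mixed-integer one.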

As more firms come to appreciate what collateral optimization can offer their institutions, the challenge of collateral infrastructure is taking a more central role in their thinking. Transcend Street’s CoSMOS solution provides a ready-made infrastructure for data mining, analytics and actionable decision-making. This is the infrastructure that results in robust collateral optimization.

About the Author

Bimal Kadikar is a founder and CEO of Transcend Street Solutions, an innovative technology company focused on building next-generation collateral and liquidity management solutions. Prior to founding Transcend Street Solutions, Bimal served in several senior roles at Citi Capital Markets Technology Division, where he managed global teams and projects with budgets of $500+ million in supporting multi-billion-dollar businesses.

Bimal led the technology organization for Citi’s prominent Fixed Income, Currencies and Commodities businesses globally. He was also tapped to build the technology platforms for the Prime Finance, Futures & OTC Clearing businesses as part of Citi’s push to expand these business areas. Many of these technology platforms have won industry accolades and client recognition, helping Citi grow in these businesses significantly over the years.

Bimal also led a high-profile initiative driving Collateral, Liquidity and Margin strategy globally at Citi, partnering with multiple business and cross-functional areas such as Global Treasury, Repos, Securities Lending, Prime Finance, Futures & Clearing, Margin Operations, Securities Services and Technology.